Visual SLAM Introduction

Definition

SLAM (Simultaneous Localization and Mapping) is a technology that builds a map of the surrounding environment while simultaneously estimating the camera's location within it. As the camera moves, the map is automatically expanded by measuring how much the view has changed. Unlike many existing SLAM systems, which require separate hardware equipped with additional sensors, MAXST Visual SLAM is optimized for smartphones: you can create a virtual map by extracting a point cloud of the real environment with just a single RGB camera.
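To illustrate what "localization and mapping at the same time" means, here is a minimal toy sketch, assuming 2D (planar) poses and assuming the relative camera motion between frames has already been measured. A real Visual SLAM system estimates that motion from image features in 3D; the names `Pose2D` and `run_toy_slam` are hypothetical and are not part of the MAXST SDK.

```python
import math
from dataclasses import dataclass


@dataclass
class Pose2D:
    """Hypothetical planar camera pose: position (x, y) and heading theta in radians."""
    x: float
    y: float
    theta: float

    def compose(self, dx: float, dy: float, dtheta: float) -> "Pose2D":
        """Apply a relative motion expressed in this pose's local frame."""
        c, s = math.cos(self.theta), math.sin(self.theta)
        return Pose2D(self.x + c * dx - s * dy,
                      self.y + s * dx + c * dy,
                      self.theta + dtheta)

    def to_world(self, px: float, py: float) -> tuple:
        """Transform a point observed in the camera frame into world coordinates."""
        c, s = math.cos(self.theta), math.sin(self.theta)
        return (self.x + c * px - s * py, self.y + s * px + c * py)


def run_toy_slam(motions, observations):
    """Track the pose (localization) and grow a point map (mapping) in one loop.

    motions: list of (dx, dy, dtheta) relative motions, one per frame (assumed given).
    observations: list of point lists seen in the camera frame at each new pose.
    """
    pose = Pose2D(0.0, 0.0, 0.0)
    trajectory = [pose]
    world_map = []
    for (dx, dy, dtheta), local_points in zip(motions, observations):
        pose = pose.compose(dx, dy, dtheta)  # localization: accumulate the measured change
        trajectory.append(pose)
        # mapping: place every observed point into the shared world frame
        world_map.extend(pose.to_world(px, py) for px, py in local_points)
    return trajectory, world_map


if __name__ == "__main__":
    traj, points = run_toy_slam(
        motions=[(1.0, 0.0, 0.0), (1.0, 0.0, math.pi / 2)],
        observations=[[(0.0, 1.0)], [(1.0, 0.0)]],
    )
    print(traj[-1], points)
```

In this run the camera ends at roughly (2, 0) facing 90 degrees, and the map holds points near (1, 1) and (2, 1). Because each new pose is built from the previous one, any error in the measured motion carries forward into both the trajectory and the map.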

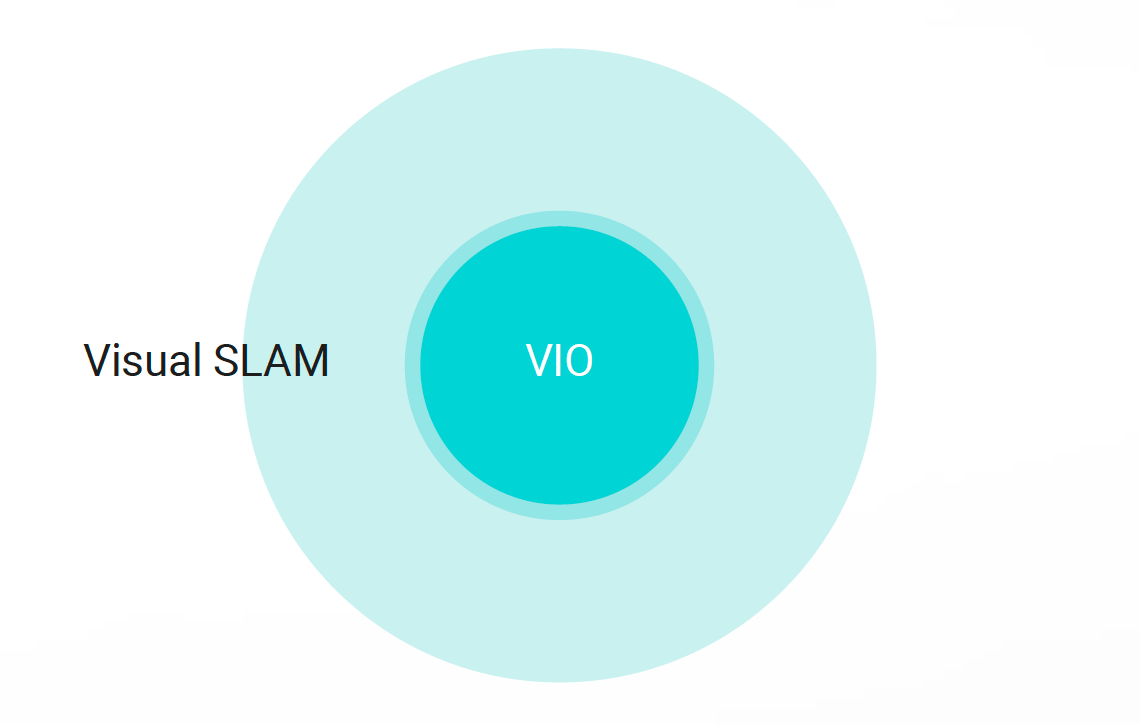

- Visual SLAM = VIO (Visual-Inertial Odometry) + Loop Closing
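The split between odometry and loop closing can be illustrated with a toy example. Odometry accumulates small errors, so when the camera returns to a place it has already mapped, loop closing recognizes that place and pulls the trajectory back into agreement. The sketch below assumes the loop has already been detected and simply spreads the end-point error linearly along the path; this is a deliberate simplification standing in for the pose-graph optimization that full SLAM systems typically use, and `close_loop` is a hypothetical name.

```python
def close_loop(positions, loop_target):
    """Spread loop-closure error linearly along a drifted 2D trajectory.

    positions: list of (x, y) estimates from odometry, oldest first.
    loop_target: where the last position should be, according to a recognized
        earlier place (assumed to be already detected by place recognition).
    """
    if len(positions) < 2:
        return list(positions)
    n = len(positions) - 1
    err_x = loop_target[0] - positions[-1][0]
    err_y = loop_target[1] - positions[-1][1]
    # Later poses accumulated more drift, so they receive a larger share of the correction.
    return [(x + err_x * i / n, y + err_y * i / n) for i, (x, y) in enumerate(positions)]


if __name__ == "__main__":
    # A square walk that should end where it started, but odometry drifted to (0.2, 0.1).
    drifted = [(0.0, 0.0), (1.0, 0.0), (1.0, 1.0), (0.0, 1.0), (0.2, 0.1)]
    print(close_loop(drifted, loop_target=(0.0, 0.0)))
```

The first pose is left untouched, the last pose is moved exactly onto the recognized starting point, and the poses in between are corrected in proportion to how far along the loop they are.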

Application

Because saved map files can be reloaded at any time, Visual SLAM is well suited to sophisticated AR projects. You can augment content on trained 3D objects or environments and apply it in fields such as assembly guidance, manuals, industrial machine tracking, and games.

Sensor-fusion SLAM

MAXST Sensor-fusion SLAM tightly couples an RGB camera with an IMU (inertial measurement unit) sensor, processing vision and IMU measurements jointly. Sensor-fusion SLAM will replace MAXST Instant Tracker technology. By combining AR with accelerometer and gyroscope data and providing a 6-degrees-of-freedom (6DoF) pose and a point cloud, it is expected to be widely used in automobiles, drones, HMDs, and optical see-through devices.
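The benefit of fusing vision with an IMU can be sketched with a classic complementary filter on a single heading angle: the gyroscope is integrated at a high rate, and each vision estimate pulls the result back to cancel gyro drift. When a frame yields no vision estimate (for example during fast motion), the IMU alone carries the heading forward. Note that this is a loosely coupled technique swapped in for illustration; a tightly coupled system estimates the state jointly from the raw visual and inertial measurements. The function name, the `alpha` weight, and the 1D heading model are all assumptions.

```python
from typing import Optional, Sequence


def complementary_filter(gyro_rates: Sequence[float],
                         vision_headings: Sequence[Optional[float]],
                         dt: float,
                         alpha: float = 0.98) -> list:
    """Fuse gyro yaw rates (rad/s) with vision heading estimates (rad).

    vision_headings[i] may be None when tracking produced no estimate for frame i.
    The first vision estimate must be present; it initializes the heading.
    Angles are assumed small enough that wrap-around at +/-pi can be ignored.
    """
    heading = vision_headings[0]
    if heading is None:
        raise ValueError("the first vision heading is needed to initialize the filter")
    fused = [heading]
    for rate, vision in zip(gyro_rates[1:], vision_headings[1:]):
        predicted = heading + rate * dt  # IMU propagation: smooth but drifts
        if vision is None:
            heading = predicted  # no visual fix: rely on the IMU alone
        else:
            # blend the IMU prediction with the drift-free (but noisier) vision estimate
            heading = alpha * predicted + (1.0 - alpha) * vision
        fused.append(heading)
    return fused


if __name__ == "__main__":
    # Stationary camera; the gyro has a constant 0.1 rad/s bias, vision reports 0 rad.
    steps, dt = 100, 0.1
    gyro = [0.1] * steps
    vision = [0.0] * steps
    print("gyro only:", sum(gyro[1:]) * dt)
    print("fused:    ", complementary_filter(gyro, vision, dt)[-1])
```

With these numbers the pure gyro integration drifts to about 0.99 rad, while the fused heading stays below 0.5 rad. A constant gyro bias still leaves a residual offset in this simple filter, which is one reason full visual-inertial estimators usually include the IMU biases in the estimated state.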

Cloud SLAM

MAXST Cloud SLAM technology currently retrieves point clouds from the cloud during image recognition, and MAXST is taking the next step: recognizing 3D objects through the cloud. This will make it possible to store 3D map data on a cloud server, where multiple maps can be shared with one another to expand the mapped area. Ultimately, the goal is a Cloud SLAM technology that can house the entire real world in cloud space.